so i been struggling with ugly fonts in 8K and couldn’t figure out what was causing it …

just fixed it today. it was the “game mode” on Sony TV … changed it to “graphics mode” and text was instantly beautiful …

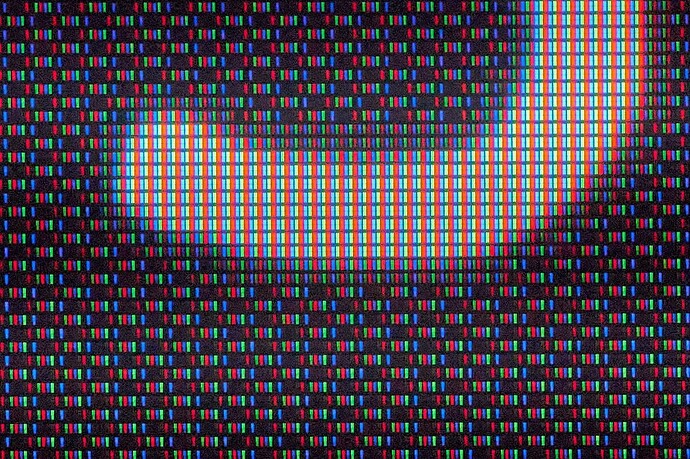

except now i can actually see reflections between different layers of the screen. the text itself is displayed sharp and clean in terms of pixels, but then it reflects through the various wide-angle viewing diffusers and such, and i end up seeing a ghost version offset by maybe about 1/4 millimeter depending on what angle i’m looking at.

if i’m looking dead on at 90 degrees the offset disappears and text is razor sharp. if i’m looking at an angle the text is doubled.

of course when you sit close to a large screen you can only see maybe 1/4 of the screen head-on, while most of the screen will actually be at an angle.

so it’s an issue you will probably want to check for when getting an 8K TV …

overall there are many such weird issues that pop up with 8K. another one, for example, is dithering applied to improve viewing angles, and so on …

basically manufacturers assume, just like @kimkardashian does, that nobody actually sees 8K so they start to do all sorts of fuckery on a pixel level either to improve viewing angles or to decrease processing latency and so on …

but you can actually see some of that shit when you sit 4 feet away from the screen like me …

once you find the right settings though, even though it still may not be perfect, it’s a noticeable upgrade from 4K …

still nowhere near as sharp as your phone’s screen though … we’re talking roughly 117 pixels per inch on a 75" 8K screen versus 400 pixels per inch on a phone or about 250 pixels per inch on a tablet
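
for anyone who wants to check the density of their own screen, here is a minimal sketch of the calculation. it assumes 8K means 7680x4320 pixels and that the quoted size is the diagonal of the active area; the function name `pixels_per_inch` is just something i made up for illustration.

```python
import math

def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density: length of the diagonal in pixels divided by its length in inches."""
    return math.hypot(width_px, height_px) / diagonal_in

# Assumption: 8K = 7680x4320, 75" measured along the diagonal
ppi_8k_75 = pixels_per_inch(7680, 4320, 75)
print(round(ppi_8k_75))  # 117
```

swap in your own resolution and diagonal to compare a monitor, tablet or phone the same way.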

i can’t see pixels on a phone from any distance. i can barely see something not quite perfect on a tablet. on a 75" 8K screen when it is working properly i can see artifacts up to about 3 feet from it. when it is not set up right or when there are some unintended reflections between films etc. those can be seen for maybe up to about 6 feet away.

of course i actually sit 4 feet away so the only artifact i’m seeing right now is those reflections between internal films. after switching to “graphics” mode i can’t see the pixel level weirdness anymore from my viewing distance.

i am still looking forward to 16K screens. with 16K you would be able to sit 3 feet away from a 100 inch screen and have it perfectly sharp. can’t do that with 8K.
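
a rough way to compare these setups is pixels per degree of visual angle, which combines density and viewing distance. the sketch below is only an estimate under stated assumptions: 8K is taken as 7680x4320, 16K as 15360x8640, the size is the diagonal, and the number is for the center of the screen viewed head-on (it ignores the off-angle parts of the panel). the helper name `pixels_per_degree` is hypothetical.

```python
import math

def pixels_per_degree(width_px, height_px, diagonal_in, distance_in):
    """Pixels per degree of visual angle at the screen center, viewed head-on."""
    ppi = math.hypot(width_px, height_px) / diagonal_in
    pixel_pitch_in = 1.0 / ppi
    # Angle subtended by a single pixel at the given viewing distance
    pixel_angle_deg = math.degrees(2 * math.atan(pixel_pitch_in / (2 * distance_in)))
    return 1.0 / pixel_angle_deg

# Assumptions: 8K = 7680x4320, 16K = 15360x8640, distances in inches
ppd_8k_75_at_4ft = pixels_per_degree(7680, 4320, 75, 48)
ppd_8k_100_at_3ft = pixels_per_degree(7680, 4320, 100, 36)
ppd_16k_100_at_3ft = pixels_per_degree(15360, 8640, 100, 36)

for label, ppd in [
    ('75" 8K at 4 ft', ppd_8k_75_at_4ft),
    ('100" 8K at 3 ft', ppd_8k_100_at_3ft),
    ('100" 16K at 3 ft', ppd_16k_100_at_3ft),
]:
    print(f"{label}: {ppd:.0f} pixels per degree")
```

under these assumptions a 100" 16K screen at 3 feet comes out at about 111 pixels per degree, a bit denser than a 75" 8K screen at 4 feet (about 98), while a 100" 8K screen at 3 feet lands at about 55, roughly half.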

for 16K to make practical sense though it would probably need to curve around you, and for that to make sense the aspect ratio would probably need to be wider.

in other words realistically i only want maybe 70% more lines of resolution vertically and horizontally if everything stays the same except size. but with a wide, curved display i could make use of maybe as much as 250% more lines of resolution horizontally, though i would have to turn my head to see the edges of the screen, but that is fine.

i would also want a 600 Hz refresh rate instead of 60 Hz.

i give it another 20 years and we will get there.